Across Cleveland, Atlanta, and Los Angeles, the fight over AI in policing is no longer an abstract debate about innovation; it is a test of who controls public data, who gets watched, and whether residents should trust institutions that ask for faith while offering limited transparency. Under the second Trump administration, as federal oversight of local police has been scaled back, cities have leaned harder on private surveillance systems like Flock Safety, even as residents, civil-rights advocates, and some officials warn that tools marketed as crime-fighting infrastructure can expand surveillance faster than accountability.

The policy backdrop

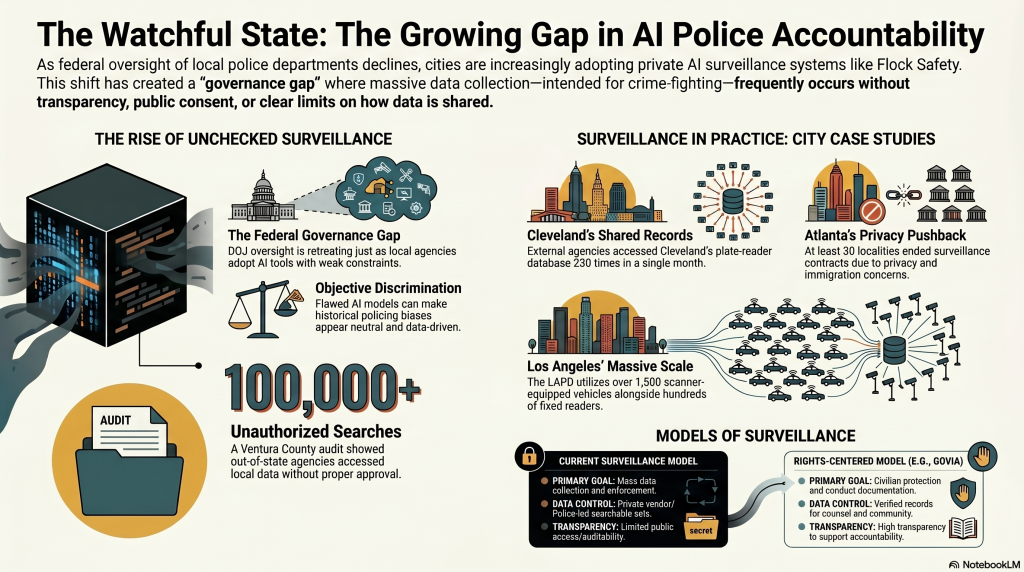

The federal posture matters because DOJ oversight has historically been one of the few external checks on local policing patterns and practices. Reuters reported that the Trump administration ended efforts to secure oversight agreements for police departments in Minneapolis and Louisville in 2025, and later reporting said the Civil Rights Division had sharply reduced its investigative capacity under the second Trump administration. That retreat lands at the exact moment local agencies are adopting more AI-enabled systems, creating a governance gap where the fastest-moving technologies face the weakest public constraints.

Why AI in justice is different

AI in criminal justice is not just a software question; it can shape arrests, searches, bail decisions, sentencing recommendations, and surveillance priorities, all of which touch liberty interests. Experts and advocacy groups warn that these tools can magnify existing bias because they are trained on historical data produced by systems already marked by unequal policing. The core problem is not simply error; it is that a flawed model can make discrimination appear objective, narrowing due process even as it increases officials' confidence in the result.

Cleveland: convenience and control

Cleveland offers a vivid case study in how quickly surveillance infrastructure becomes normalized. Signal Cleveland reported that the city purchased 100 Flock license plate readers in 2022 for $250,000, and that in January 2026 law enforcement from Northeast Ohio and beyond accessed Cleveland’s plate-reader database about 230 times. The same report noted that Cleveland participates in nationwide and statewide lookup functions, which let other agencies check, without prior approval, whether a plate was detected, and that the system stores images of vehicles that are not on any hot list. That means the public is not simply funding crime-fighting cameras; it is also underwriting a searchable record of ordinary movement.

Atlanta: the company’s home city, the country’s friction point

Atlanta sits at the center of the Flock debate because the company is based there, and the region has become a proving ground for how local governments defend surveillance as public safety while residents push back on privacy grounds. WABE reported that at least 30 localities have deactivated or ended relationships with Flock since early 2025 amid immigration-surveillance concerns. In Dunwoody, the company responded by emphasizing that customers own the images, that the data is not sold, and that contract language governs use, but critics argue that ownership on paper does not erase the power imbalance created when local police control vast searchable datasets.

Los Angeles: scale without clear limits

Los Angeles shows the sheer size of the ecosystem. The Los Angeles Times reported that the city’s Bureau of Street Lighting had mounted 324 plate readers over five years, while the LAPD said it had 1,500 police vehicles equipped with scanners and access to an additional 280 plate readers in fixed locations, about 120 of them Flock devices. LA officials have also started asking harder questions about storage and sharing after concerns that federal agencies may be using Flock data in immigration enforcement. That matters because scale changes the constitutional and civic stakes: once a city has normalized ubiquitous capture, the line between targeted investigation and generalized monitoring starts to blur.

The trust problem

This is why the public argument around Flock has become so charged. Residents in places like Cleveland and Dunwoody are not only asking whether the technology works; they are asking whether police departments can verify vendor claims, audit access, and discipline misuse when outside agencies search local data. CBS News reported an audit in Ventura County showing out-of-state agencies accessed local Flock data hundreds of thousands of times without approval, a case that crystallized fears that “sharing” can become functionally borderless once technical controls are weak or misconfigured. In that environment, the issue is not whether the system can help solve cases; it is whether it creates a surveillance commons with too little democratic consent.

What fairness requires

A fairer model would require public impact assessments, independent audits, meaningful human oversight, strict access logs, and clear limits on secondary use, especially for immigration or reproductive-health investigations. It would also require agencies to prove necessity, not just utility, and to disclose how often the tools actually change outcomes in cases rather than merely expanding the volume of data available to investigators. Without those safeguards, AI-assisted policing risks becoming a machine that accelerates the very inequalities it claims to manage.

Where GoVia fits

GoVia Highlight A Hero can be positioned as the counter-model: a rights-centered platform that protects civilians during police encounters while documenting conduct in ways that can support attorneys, community accountability, and officer recognition without turning public safety into a pure enforcement metric. Its strongest use case is not replacing police judgment, but creating a verified record when encounters go sideways, then routing that record to counsel, oversight, and community review with tighter privacy controls than conventional surveillance stacks. That makes it potentially valuable in a world where residents increasingly distrust black-box policing tools and want systems that preserve both safety and dignity.

A sharper civic choice

The real contrast is not “AI or no AI.” It is whether cities use AI to concentrate power or to constrain it. In Cleveland, Atlanta, and Los Angeles, the current model often gives the public more cameras, more data, and fewer answers; the better model would give people more protection, more transparency, and a genuine way to challenge misuse.
