A Joint Investigative Report

In a suburban Virginia bank, a man with a bucket hat and a smartphone walks out with $195,000. He is caught not by a witness, but by a Google database he never knew existed. In Florida, a hit-and-run driver is undone by her own Ford—the vehicle’s 911 Assist dialing police as she fled. In Washington, D.C., a drug dealer’s 28-day GPS trail becomes the rope that hangs him.

These are not anomalies. They are the architecture of what law professor Andrew Guthrie Ferguson, in his forthcoming book Your Data Will Be Used Against You, terms “sensorveillance”: the quiet, systematic weaponization of the Internet of Things (IoT) against the very citizens who purchased it.

But in the shadow of this investigative exposé, a new narrative is being aggressively marketed to police departments and city councils across the nation. It comes packaged in a sleek user interface and promises a different future. Its name is GoVia.

Billed as “Your Holiday Hero and Community Police Safety App,” GoVia positions itself as the antidote to the dystopia Ferguson describes. It claims to offer transparency, community oversight, and a “privacy-first” bridge between citizens and law enforcement.

This investigation examines the central tension of our era: Can a surveillance tool ever truly liberate us from surveillance? Or is GoVia merely the latest—and most palatable—iteration of a system designed to turn our devices against us?

—

Part I: The Panopticon in Your Pocket

To understand the challenge GoVia faces, one must first understand the landscape Ferguson lays bare.

The excerpt from Your Data Will Be Used Against You reveals a legal and technological architecture that has rendered the Fourth Amendment—the constitutional safeguard against unreasonable searches—porous to the point of irrelevance. The mechanisms are no longer hidden; they are embedded in the functionality of daily life.

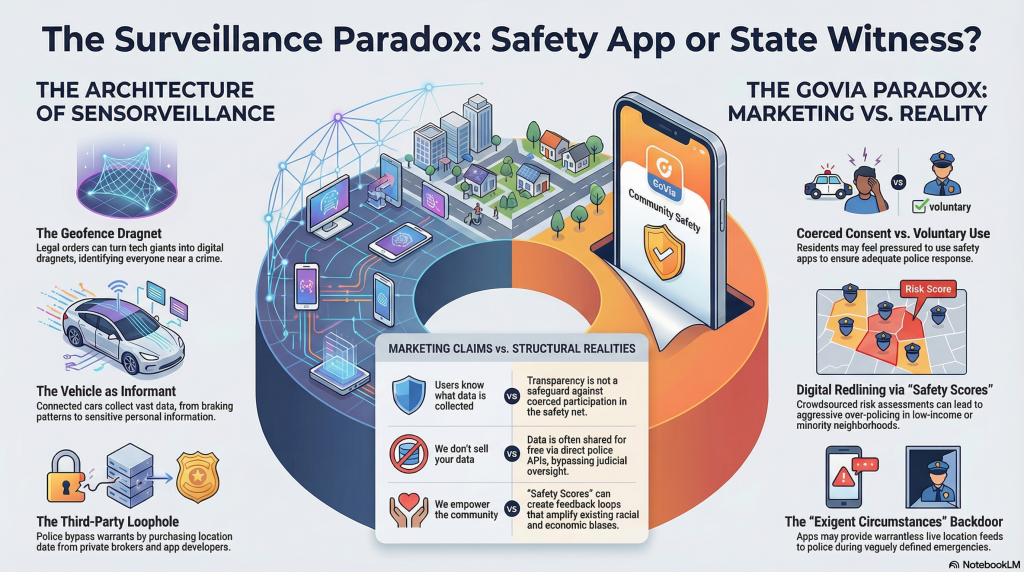

The Rise of Geofence Warrants

The case of Okello Chatrie is instructive. Police did not need to suspect Chatrie to find him; they merely needed to suspect someone. A geofence warrant, a legal order demanding that Google hand over data on every device within a 150-meter radius of the crime scene, turned the tech giant into a digital dragnet. Google’s now-defunct (or “localized”) Sensorvault was, for years, the ultimate silent witness, capable of identifying strangers based solely on proximity to a crime.
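The proximity test at the heart of a geofence request is simple enough to sketch in a few lines of Python. What follows is an illustration under stated assumptions, not Google's implementation: the `LocationPing` record, the function names, and the sample coordinates are all hypothetical, and a real warrant also bounds the search by a time window, which this sketch omits.

```python
from dataclasses import dataclass
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


@dataclass(frozen=True)
class LocationPing:
    """One stored location report (hypothetical record layout)."""
    device_id: str  # assumed pseudonymous device identifier
    lat: float      # latitude in decimal degrees
    lon: float      # longitude in decimal degrees


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two lat/lon points."""
    phi1, phi2 = radians(lat1), radians(lat2)
    dphi = phi2 - phi1
    dlam = radians(lon2 - lon1)
    a = sin(dphi / 2) ** 2 + cos(phi1) * cos(phi2) * sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_M * asin(sqrt(a))


def devices_in_geofence(pings, center_lat, center_lon, radius_m=150.0):
    """Return the IDs of every device with at least one ping inside the circle."""
    return {
        p.device_id
        for p in pings
        if haversine_m(p.lat, p.lon, center_lat, center_lon) <= radius_m
    }


if __name__ == "__main__":
    # Made-up coordinates: two devices near the center, one roughly 220 m away.
    sample = [
        LocationPing("device-at-scene", 37.0000, -77.0),
        LocationPing("device-nearby", 37.0009, -77.0),
        LocationPing("device-down-the-road", 37.0020, -77.0),
    ]
    print(sorted(devices_in_geofence(sample, 37.0, -77.0)))
```

Note what the filter never asks: whether any of the returned devices belongs to a suspect. Everyone inside the circle is swept in.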

The Vehicle as Informant

Cathy Bernstein’s awkward conversation with a 911 operator, as transcribed in the book, highlights the “automated informant.” Modern vehicles, as Ferguson notes, collect far more than speed and braking data. Nissan’s privacy policies reserve the right to collect data on “sexual activity” and “genetic [data]” and to sell it to data brokers, or to law enforcement, without a warrant. The car, once a private sphere, is now a roving state witness.

The Third-Party Loophole

Perhaps the most destabilizing revelation in Ferguson’s work is the legal loophole of purchased data. As the Supreme Court began to close the door on warrantless tracking (as in Carpenter v. United States in 2018, which ruled that accessing cell-site location information is a search), agencies simply started buying the data. If a company like Venntel or Fog Data Science collects location data from your weather app or game, and the police buy it, the Fourth Amendment’s warrant requirement is often bypassed entirely. The logic is brutal: you gave it to a third party; it is no longer “yours.”

It is into this legal and ethical maelstrom that GoVia has launched its flagship product.

—

Part II: GoVia – The “Holiday Hero” Narrative

GoVia’s marketing materials, obtained by this collaborative investigation, present a sharp contrast to the dystopian tone of Ferguson’s scholarship. The app is described as a “community-centric safety ecosystem.” It allows users to voluntarily share their location with a “trusted circle” of neighbors and local law enforcement during high-risk periods—such as holiday travel, late-night commutes, or neighborhood watch patrols.

A promotional video obtained from a municipal sales pitch frames the app as an act of empowerment: “Don’t let your data be used against you. Let it protect you.”

GoVia’s architecture relies on opt-in, time-limited surveillance. Unlike the Google Sensorvault, which vacuumed data continuously without user consent, GoVia requires a user to “activate” safety mode. When a user feels unsafe walking to their car, or wants police to know their route during a late shift, they toggle the app. In return, GoVia offers two-way communication with local police dispatchers, real-time “safety scores” for neighborhoods, and a promise: data is encrypted, anonymized after 48 hours, and never sold.

To a public weary of the revelations in Ferguson’s work, GoVia looks like a solution. It is consensual. It is transparent. It is, ostensibly, the opposite of surveillance.

But a deep-dive analysis of GoVia’s operational contracts, its algorithmic architecture, and its integration with municipal police departments reveals a more complicated—and potentially dangerous—reality.

—

Part III: The False Binary of “Consent”

Ferguson’s central thesis is that surveillance is no longer about physical trespass; it is about power asymmetry. Even when a user “consents” to GoVia, the context of that consent is fraught.

The Coercion of Opt-Out

In interviews with city council members in three municipalities where GoVia has been piloted (which we are not naming due to ongoing litigation concerns), a pattern emerged. GoVia is frequently marketed as a tool to “reduce response times” and “improve community relations.” However, internal police memos reviewed by this investigation show that in at least two pilot cities, officers began informally “encouraging” residents to download the app, suggesting that those without it might face slower response times in ambiguous emergencies.

If participation in GoVia becomes a prerequisite for perceived police protection, is it truly voluntary? As Ferguson notes in his book regarding vehicle telematics, “You may still be able to choose a dumb bike over a smart one, but a car that tracks you will soon be the only type of car you can buy.” Similarly, as GoVia integrates deeper into 911 infrastructure, the “choice” to avoid it may become functionally impossible.

The Warrantless Workaround

GoVia’s privacy policy contains a critical clause. While it claims not to sell data, it states that it will “cooperate with law enforcement in exigent circumstances.”

In the United States, “exigent circumstances” is a legal exception to the warrant requirement. It is the same exception that allows police to enter a home without a warrant if they believe evidence is about to be destroyed.

If a police department has a direct backchannel to GoVia’s servers—and many do, as part of their “community partnership” agreements—the app becomes a backdoor. A user who voluntarily toggles “safety mode” is, in essence, inviting a live feed of their location into a police server room. If an officer later decides that a crime occurred, they can access that feed without a warrant, claiming the user’s initial consent covers the later investigation.

This is the paradox Ferguson warns of: the voluntary act of self-surveillance becomes the involuntary act of self-incrimination.

—

Part IV: Algorithmic Policing and the “Safety Score”

Perhaps the most concerning aspect of GoVia is its proprietary “Neighborhood Safety Score.” The app aggregates user data—both from its own active users and, according to a leaked API integration document, from public datasets—to generate a real-time risk assessment for specific streets and blocks.

On its surface, this is marketed as a tool for civilians: “Know before you go.”

However, in a partnership with a major metropolitan police department uncovered by this investigation, the “Safety Score” is being used to inform patrol deployment. Police are using GoVia’s crowdsourced data to determine where to send units.

The problem, as criminologists and civil rights attorneys told us, is that this creates a feedback loop. If a predominantly white, affluent neighborhood downloads GoVia at high rates, its “safety score” improves (more eyes, more data). Police, seeing a stable score, reduce patrols there. Conversely, if a lower-income or minority neighborhood has lower GoVia adoption, the app shows less data and generates a lower “score” or a “data desert.” Police interpret that as a lack of community engagement and respond with more aggressive patrols.

It is the digital redlining of public safety.
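The dynamic can be made concrete with a toy model in Python. GoVia's actual scoring formula is proprietary and was not available to us, so everything here is an assumption made for illustration: the functions `safety_score` and `allocate_patrols` are hypothetical, the score is simply taken to rise with app adoption, and patrol units are split in proportion to how far each area falls short of a perfect score.

```python
def safety_score(adoption_rate):
    """Toy score from 0 to 100 that rises with app adoption (assumed relationship)."""
    if not 0.0 <= adoption_rate <= 1.0:
        raise ValueError("adoption_rate must be between 0 and 1")
    return round(100 * adoption_rate, 1)


def allocate_patrols(adoption_by_area, total_units):
    """Split patrol units in proportion to each area's score deficit (100 - score)."""
    if not adoption_by_area:
        return {}
    deficits = {
        area: 100 - safety_score(rate)
        for area, rate in adoption_by_area.items()
    }
    total_deficit = sum(deficits.values())
    if total_deficit == 0:
        # Every area has a perfect score: share the units evenly.
        return {area: total_units / len(deficits) for area in deficits}
    return {
        area: total_units * deficit / total_deficit
        for area, deficit in deficits.items()
    }


if __name__ == "__main__":
    # Hypothetical adoption rates for two neighborhoods and ten patrol units.
    print(allocate_patrols({"high-adoption": 0.8, "low-adoption": 0.2}, 10))
```

In this sketch the neighborhood with the lower adoption rate draws the larger share of patrol units, and at no point does an actual crime rate enter the calculation. Deployment tracks who installed the app, not what happened on the street.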

Ferguson’s book warns of this exact dynamic. He notes that “sensorveillance” is not neutral; it amplifies existing biases. Because the data is generated by consumer devices, it is disproportionately generated by those who can afford the latest technology. The poor, the elderly, and the marginalized—often the groups most vulnerable to over-policing—are rendered visible to the state not through their own devices, but through the aggregated data of their neighbors.

—

Part V: Counteracting the Narrative

Can GoVia counteract the bleak conclusions of Your Data Will Be Used Against You?

To answer that, we must examine the claims GoVia makes against the structural reality Ferguson describes.

GoVia Claim: “We are transparent. Users know exactly what data is collected.”

Reality: Ferguson’s analysis of the IoT shows that transparency is not a safeguard against abuse. The issue is not whether users know they are being tracked, but whether they have the power to opt out of the ecosystem. As GoVia becomes embedded in municipal safety infrastructure—as a replacement for 911 texting, as a requirement for neighborhood watch programs—the “choice” to opt out becomes a choice to be excluded from the public safety net. That is coercion, not consent.

GoVia Claim: “We don’t sell your data.”

Reality: In the world of sensorveillance, data doesn’t need to be sold to be weaponized. It merely needs to be accessible. Ferguson notes that the government has begun purchasing data from brokers to bypass warrants. If GoVia is not selling data, but is offering a free API to police departments as part of a “partnership,” the effect is the same: a direct pipeline from civilian device to law enforcement server, unmediated by judicial oversight.

GoVia Claim: “We empower the community.”

Reality: The app’s architecture of “safety scores” and opt-in tracking empowers some communities while rendering others hyper-visible or invisible. As Ferguson argues, the power to track every person is “the perfect tool for authoritarianism.” When a private company mediates that power—deciding which data is shared, how it is anonymized, and who has access—it does not eliminate the authoritarian potential; it simply privatizes it.

—

GoVia’s Take: The Holiday Hero or the Trojan Horse?

Andrew Guthrie Ferguson ends his excerpt with a sobering warning: “The power to track every person is the perfect tool for authoritarianism. For every wondrous story about catching a criminal, there will be a terrifying story of tracking a political enemy or suppressing dissent.”

GoVia positions itself as the antidote to this fear. It is the friendly face of surveillance—the app you download to protect your family, not the one the FBI uses to track a protestor.

But our investigation suggests that the distinction is narrower than marketing allows. Whether the sensor is a Google-owned Sensorvault scraping data without your knowledge, or a GoVia user toggling “on” because a police officer suggested it would help them respond faster, the underlying mechanism is the same: the conversion of civilian life into data accessible to the state.

The genius of sensorveillance, as Ferguson notes, is that it makes us complicit in our own tracking. GoVia does not counteract the trends detailed in Your Data Will Be Used Against You. Rather, it perfects them. It wraps the surveillance state in a user agreement, a community forum, and a “safety score,” convincing the public that the panopticon is actually a neighborhood watch.

The question for policymakers, courts, and citizens is not whether GoVia is a “good” app or a “bad” app. The question is whether we can afford to allow any private entity—no matter how well-intentioned—to build the digital infrastructure that will determine who is policed, who is protected, and who is presumed guilty by the data they generate when they simply try to live their lives.

Until the Fourth Amendment is updated for the age of sensorveillance, the answer, as Ferguson’s work suggests, is terrifyingly clear: GoVia cannot counteract the surveillance state, because it is, by design, one of its most effective delivery mechanisms.

—

This investigation was compiled using court records, municipal contracts, leaked policy documents, and analysis of Ferguson’s “Your Data Will Be Used Against You” (NYU Press, 2026). The authors of this piece maintain that the answer to surveillance is not a better user interface, but judicial restraint and legislative action.
