The Protect and Serve Act of 2025 sits at the intersection of two colliding trends in American justice: a political drive to harden penalties for attacks on officers, and a technological wave that is quietly reshaping how those encounters happen in the first place.

The new federal shield

In August 2025, the National Police Association renewed its push for the Protect and Serve Act of 2025 (S. 167 / H.R. 1551), a bipartisan bill that would create a new federal crime for intentionally targeting federal, state, or local officers with violence. Under the proposal, assault causing serious injury could carry up to 10 years in prison, while killing, attempting to kill, or kidnapping an officer could trigger a potential life sentence.

The Act’s sponsors frame it as a narrowly tailored deterrent, designed to make it easier for federal prosecutors to pursue those who deliberately target police, without imposing across-the-board mandatory minimums or expanded death penalty provisions. That design is intentional: unlike the Back the Blue Act and the Thin Blue Line Act, which lean heavily on mandatory minimums and broader death penalty eligibility, Protect and Serve is written to be more palatable to lawmakers wary of such measures.

The Back the Blue Act of 2025 proposes new federal crimes and long mandatory prison terms, including the possibility of the death penalty in the most serious cases, while limiting avenues for later appeal and expanding where officers may carry concealed weapons. Civil rights coalitions have criticized similar “Back the Blue” packages as duplicative of existing law and unlikely to address root causes of violence against police. The Thin Blue Line Act, by contrast, would amend the federal criminal code to make the murder of a public safety officer a more straightforward trigger for the federal death penalty.

Against that backdrop, Protect and Serve has emerged as the most strategically viable of the three bills: it offers a strong symbolic and legal shield for officers but stops short of the sentencing provisions that many Democrats oppose, which is why it is attracting the broadest bipartisan backing.

A crisis of trust on both sides of the badge

Even as Congress debates how to punish attacks on officers, the basic facts on the ground show a system that is dangerous for both civilians and police. Mapping Police Violence reports that 2023 was the deadliest year for police violence since its data collection began, with at least 1,329 people killed by law enforcement—roughly one person every 6.6 hours. Another analysis estimates 1,352 police killings in 2023, meaning law enforcement agencies were responsible for about 7 percent of all homicides in the United States that year.
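
The arithmetic behind those two figures can be checked in a few lines. The sketch below uses only the numbers cited above; the total homicide count it prints is back-calculated from the "about 7 percent" share and is an implied figure, not a reported statistic.

```python
HOURS_PER_YEAR = 365 * 24  # 2023 was not a leap year

killings_mpv = 1329         # Mapping Police Violence count cited above
killings_other = 1352       # second estimate cited above
share_of_homicides = 0.07   # "about 7 percent", as cited above

# Average gap between killings, in hours.
hours_between_killings = HOURS_PER_YEAR / killings_mpv

# Total homicides implied by the 7 percent share (an inference, not a source figure).
implied_total_homicides = killings_other / share_of_homicides

print(f"One killing every {hours_between_killings:.1f} hours")
print(f"Implied total homicides: about {implied_total_homicides:,.0f}")
```

Because the 7 percent share is itself rounded, the implied total should be read as a rough order of magnitude only.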

The burden of that violence is not evenly distributed. A UN mechanism on racial justice concluded that Black people in the United States are about three times more likely to be killed by police than white people, and 4.5 times more likely to be incarcerated. Despite more than 1,000 police killings annually, only about 1 percent of cases result in officers being charged, underscoring a persistent accountability gap.

At the same time, officers themselves face real and rising risks. Law enforcement advocacy groups point to increases in ambush-style attacks and assaults on officers, using those figures to argue that new federal protections like the Protect and Serve Act are overdue. Public opinion reflects this ambivalence: a Gallup-linked poll found overall confidence in police rose from 43 percent in 2023 to 51 percent in 2024, with especially sharp increases among younger adults and people of color, but other research shows large partisan and racial divides over how far reforms should go.

In that climate, any new legal protection that takes effect in 2025 or beyond will land in communities where many residents fear both crime and the police, and where officers feel simultaneously scrutinized, politicized, and physically vulnerable.

When AI enters the courtroom and the patrol car

Layered onto these tensions is a new, less visible force: algorithmic decision-making in policing, prosecution, and sentencing. Predictive policing systems ingest years of crime reports, arrest records, and calls for service to forecast where crimes are likely to occur, guiding deployment of officers and surveillance. When the underlying data reflect historical over-policing of Black and poor neighborhoods, those tools can send more patrols back to the same streets, generating more arrests and reinforcing the initial biased signal in a self-perpetuating loop.
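
A toy simulation can make that loop concrete. The sketch below is a deliberately simplified illustration, not a model of any real predictive policing product: it assumes two hypothetical areas with identical underlying incident rates, a single daily patrol sent to whichever area has more recorded incidents, and incidents that are recorded only where the patrol is present.

```python
def simulate_patrols(recorded, true_rate, days):
    """Send the single daily patrol to the area with the most recorded incidents.

    Incidents are only recorded where the patrol is present, so the record
    reflects where officers looked rather than where incidents occurred.
    """
    recorded = dict(recorded)  # copy so the caller's data is untouched
    patrol_days = {area: 0 for area in recorded}
    for _ in range(days):
        target = max(recorded, key=recorded.get)
        patrol_days[target] += 1
        recorded[target] += true_rate[target]
    return recorded, patrol_days


# Hypothetical inputs: both areas have the same underlying rate, but area "A"
# starts with slightly more recorded incidents because of past over-policing.
history = {"A": 12, "B": 10}
true_rate = {"A": 1, "B": 1}

recorded, patrol_days = simulate_patrols(history, true_rate, days=100)
print(recorded)     # {'A': 112, 'B': 10}
print(patrol_days)  # {'A': 100, 'B': 0}
```

Under these assumptions, a two-incident head start is enough to send every patrol to area A, and the growing record then appears to justify the allocation. Real systems allocate resources less crudely, but the sketch shows why recorded data alone cannot correct the initial skew.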

Inside courtrooms, risk assessment tools like COMPAS assign defendants algorithmic “risk scores” intended to gauge their likelihood of reoffending, influencing bail, sentencing, and parole decisions. An influential investigation and subsequent academic work found that the tool’s errors fell unevenly: Black defendants who did not go on to reoffend were roughly twice as likely as white defendants to have been labeled high risk, while white defendants who did reoffend were roughly twice as likely as Black defendants to have been labeled low risk. Judges often treat those scores as one factor among many, but experiments suggest that exposure to the algorithm can still anchor human decision-making in ways that exacerbate racial disparities.
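
The disparity described above is a difference in error rates between groups, which an auditor can measure directly once scores and outcomes are known. The following is a minimal sketch of that calculation; the function name, record layout, and toy records are all invented for illustration and are not COMPAS data.

```python
from collections import defaultdict


def error_rates(records):
    """Return false positive and false negative rates per group.

    Each record is a tuple: (group, labeled_high_risk, reoffended).
    """
    counts = defaultdict(lambda: {"fp": 0, "tn": 0, "fn": 0, "tp": 0})
    for group, high_risk, reoffended in records:
        if reoffended:
            counts[group]["tp" if high_risk else "fn"] += 1
        else:
            counts[group]["fp" if high_risk else "tn"] += 1

    rates = {}
    for group, c in counts.items():
        negatives = c["fp"] + c["tn"]  # people who did not reoffend
        positives = c["fn"] + c["tp"]  # people who did reoffend
        rates[group] = {
            "false_positive_rate": c["fp"] / negatives if negatives else None,
            "false_negative_rate": c["fn"] / positives if positives else None,
        }
    return rates


# Made-up toy records for illustration only.
toy = (
    [("group_1", True, False)] * 4 + [("group_1", False, False)] * 6
    + [("group_1", False, True)] * 3 + [("group_1", True, True)] * 7
    + [("group_2", True, False)] * 2 + [("group_2", False, False)] * 8
    + [("group_2", False, True)] * 6 + [("group_2", True, True)] * 4
)

for group, r in error_rates(toy).items():
    print(group, r)
```

In this toy data, group_1 is wrongly labeled high risk twice as often as group_2 (0.4 versus 0.2), while group_2 is wrongly labeled low risk twice as often (0.6 versus 0.3). That is the shape of the disparity at issue, even when overall accuracy looks similar across groups.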

For officers on the street, these systems are increasingly part of the operational environment. The same AI that tells a patrol supervisor where to send units or flags a driver as high risk during a traffic stop can shape whether an encounter feels routine or potentially deadly to both sides. As laws like Protect and Serve raise the stakes for assaults on officers, the pressure to treat every ambiguous situation as a lethal threat only intensifies, especially if an opaque algorithm has already labeled the person in front of the officer as dangerous.

The justice gap: laws that punish versus tools that prevent

The Protect and Serve, Back the Blue, and Thin Blue Line proposals share a common premise: deterrence through harsher federal penalties. Their underlying logic is that individuals contemplating violence against officers will be dissuaded by the prospect of lengthy sentences or the death penalty. But decades of criminological research—and the recent experience with mandatory minimums—suggest that the certainty and swiftness of consequences matter more than the maximum theoretical penalty, especially in impulsive or chaotic encounters.

At the same time, data on police violence and racial disparities indicate that the most dangerous moments often begin with low-level or nonviolent calls—traffic stops, disturbance complaints, mental health checks—where neither party initially expects a life-or-death confrontation. The UN racial justice mechanism warned that unless use-of-force regulations are reformed to meet international standards, preventable killings will continue; it emphasized the “systemic” nature of racism in policing and the justice system.

This is where the gap between legal shield and practical safety becomes stark. Federal statutes can define new crimes and alter sentencing ranges, but they do not by themselves change how a nervous officer approaches a late-night traffic stop, or how a frightened driver, aware of both police shootings and harsher penalties for resisting, behaves when blue lights appear in the rear-view mirror.

In the AI era, the risk is that both enforcement and accountability will be amplified along pre-existing fault lines. Predictive tools may intensify policing in Black neighborhoods, risk assessment scores may tilt judicial decisions against Black defendants, and new federal crimes may be enforced most aggressively in the same communities that already experience disproportionate punishment.

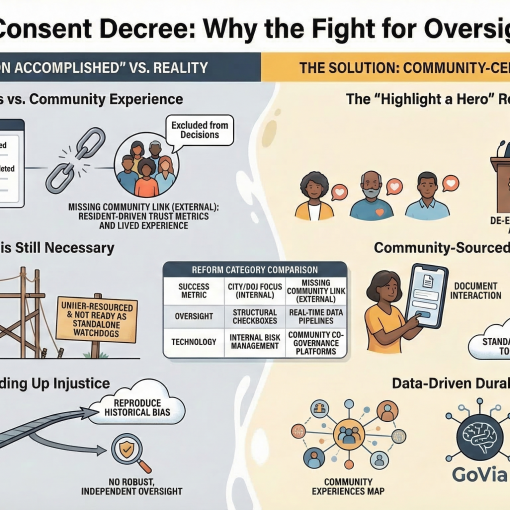

Where a platform like GoVia Highlight a Hero fits

A platform like GoVia Highlight a Hero is conceptually positioned not as one more punitive tool, but as a real-time, community-facing safety and accountability layer that operates before, during, and after police–civilian encounters. (The features described here are prospective and would need to be designed carefully to comply with privacy, civil rights, and local law.) By design, such a platform can be built to protect both officers and civilians in several concrete ways, especially in a legal environment shaped by the Protect and Serve Act and related bills.

1. Real-time, two-sided documentation

A core feature of GoVia could be secure, low-friction recording of encounters from both the community and officer perspectives, anchored by time-stamped, geolocated data and automatic upload to tamper-resistant storage. When a resident activates GoVia during a stop or call for service, the app could:

- Initiate audio–video capture, with clear on-screen notification that recording is in progress, mirroring the best practices of civilian cop-watching programs but in a structured, evidence-ready format.

- Capture contextual metadata—location, time, type of encounter—that can later be correlated with dispatch logs, body-worn camera footage, and officer reports for independent review.

- Provide an optional “shared recording” mode in which officers can opt in to link their body-cam or in-car system to the same event, strengthening their evidentiary record if they are falsely accused of misconduct or assault.
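
One common way to approach tamper resistance is a hash-chained log, in which each entry commits to the one before it so that later edits become detectable. The sketch below illustrates that general technique only; it is not a description of GoVia's design. The field names, event names, and sample values are hypothetical, and a real system would also need digital signatures, trusted timestamps, and secure off-device storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, metadata):
    """Hash the previous entry's hash together with this entry's metadata."""
    payload = json.dumps(metadata, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(chain, metadata):
    """Append a metadata record that commits to the previous entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    chain.append({
        "metadata": metadata,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, metadata),
    })
    return chain


def verify(chain):
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["metadata"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical encounter log; all values are placeholders.
log = []
append_entry(log, {
    "event": "recording_started",
    "time": "2025-01-01T22:15:00Z",
    "lat": 0.0,
    "lon": 0.0,
    "encounter_type": "traffic_stop",
})
append_entry(log, {
    "event": "recording_uploaded",
    "time": "2025-01-01T22:31:00Z",
    "video_sha256": hashlib.sha256(b"placeholder video bytes").hexdigest(),
})

print(verify(log))  # True
log[0]["metadata"]["time"] = "2025-01-01T22:45:00Z"  # simulate tampering
print(verify(log))  # False
```

A chain like this makes tampering evident rather than impossible, which is why the usual practice is to pair it with copies held by parties who do not share an interest in altering the record.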

For officers operating under the Protect and Serve framework, this kind of documentation can be a shield as much as a check. If an officer is legitimately attacked, synchronized civilian and officer-side footage can make it easier to prove intent and severity, satisfying the evidentiary demands of federal prosecutors without relying solely on officer testimony. For civilians, the same record can deter excessive force and create a robust factual basis if rights are violated.

2. De-escalation guidance and “AI with guardrails”

Rather than predicting crime or risk-scoring individuals, GoVia’s AI layer can be explicitly constrained to de-escalation and rights education. For example, during a traffic stop:

- The civilian facing the stop could receive calm, step-by-step guidance: what documents to prepare, the legal right to remain silent, how to request a supervisor, and how to safely assert that the encounter is being recorded.

- Officers using an agency version could receive context-sensitive prompts—policy reminders on use-of-force thresholds, duty-to-intervene rules, and department-specific de-escalation checklists—triggered by call type, not by race or prior record of the individual.

Crucially, the AI should be architected to avoid person-level risk predictions that replicate the COMPAS problem. Instead of labeling a specific driver as “high risk,” the system could be trained on aggregate patterns to flag high-risk situations: for example, late-night stops on high-speed roads, known mental health crisis calls, or domestic disputes—scenarios where both officer and civilian risk is structurally elevated regardless of identity. These flags would serve to slow encounters down, not speed them up, nudging toward more backup, clearer communication, and more documentation.
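
The distinction between scoring a person and flagging a situation can be enforced structurally, by giving the flagging logic no access to identity at all. The sketch below is a simple rule-based illustration under assumed inputs; the call-type names, the late-night window, and the prompt wording are hypothetical choices, not parameters from any existing system.

```python
from dataclasses import dataclass

# Hypothetical call types treated as structurally higher risk for everyone involved.
ELEVATED_CALL_TYPES = {"mental_health_crisis", "domestic_dispute"}


@dataclass(frozen=True)
class Encounter:
    """Situation-level context only: no name, race, age, or prior record fields."""
    call_type: str
    hour: int              # local hour of day, 0-23
    high_speed_road: bool


def situation_flags(encounter):
    """Return flags describing the situation, never the individual."""
    flags = []
    if encounter.call_type in ELEVATED_CALL_TYPES:
        flags.append("elevated_call_type")
    late_night = encounter.hour >= 22 or encounter.hour < 5  # assumed window
    if encounter.call_type == "traffic_stop" and late_night and encounter.high_speed_road:
        flags.append("late_night_high_speed_stop")
    return flags


def suggested_prompts(flags):
    """Any flag leads to prompts that slow the encounter down."""
    if not flags:
        return []
    return ["request backup", "slow the encounter down", "confirm recording is active"]


stop = Encounter(call_type="traffic_stop", hour=23, high_speed_road=True)
print(situation_flags(stop))                     # ['late_night_high_speed_stop']
print(suggested_prompts(situation_flags(stop)))
```

Because the `Encounter` type carries no identity fields, two drivers stopped under the same conditions necessarily receive the same flags. A trained model could replace these hand-written rules, but the same input restriction would need to hold, along with checks that situational features are not acting as proxies for race or neighborhood.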

3. Transparent, community-centered data

One of the stark lessons from Mapping Police Violence and UN reporting is that without public, disaggregated data, systemic harms remain invisible and unaddressed. GoVia can be built to aggregate anonymized data from recorded encounters—stop location, duration, outcome, whether force was used, whether anyone was injured—and make those patterns visible at the neighborhood level.

For communities, that offers a way to observe whether new laws like Protect and Serve correlate with changes in how officers behave on the street, including any shifts in arrest patterns or use-of-force incidents. For agencies, it provides an early-warning system: if a particular shift, unit, or area shows unusually high rates of use of force or complaints, leadership can intervene with training, supervision, or discipline before a catastrophic case makes national headlines.
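
An early-warning check of this kind can be quite simple. The sketch below assumes anonymized encounter records reduced to a unit label and a use-of-force indicator, suppresses units with too few encounters to report reliably, and flags any unit whose rate exceeds a multiple of the median. The threshold values and unit names are hypothetical, and a flag is a prompt for human review, not a finding of misconduct.

```python
import statistics
from collections import defaultdict


def use_of_force_rates(encounters, min_encounters=20):
    """Compute per-unit use-of-force rates from (unit, force_used) records.

    Units with fewer than min_encounters records are suppressed, both for
    statistical reliability and to reduce re-identification risk.
    """
    totals = defaultdict(lambda: [0, 0])  # unit -> [encounters, force incidents]
    for unit, force_used in encounters:
        totals[unit][0] += 1
        totals[unit][1] += int(force_used)
    return {u: f / n for u, (n, f) in totals.items() if n >= min_encounters}


def flag_units(rates, ratio=2.0):
    """Flag units whose rate is more than `ratio` times the median rate."""
    if not rates:
        return []
    baseline = statistics.median(rates.values())
    return sorted(u for u, r in rates.items() if r > ratio * baseline)


# Made-up toy records for illustration only.
toy = (
    [("unit_a", True)] * 2 + [("unit_a", False)] * 38
    + [("unit_b", True)] * 3 + [("unit_b", False)] * 47
    + [("unit_c", True)] * 9 + [("unit_c", False)] * 36
    + [("unit_d", True)] * 1 + [("unit_d", False)] * 4
)

rates = use_of_force_rates(toy)
print(rates)              # unit_d is suppressed: only 5 encounters
print(flag_units(rates))  # ['unit_c']
```

The same aggregation could be keyed by neighborhood or shift instead of unit. In each case the suppression threshold matters, because small cells can both mislead and expose individuals.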

This design also addresses a core AI fairness concern. Instead of feeding biased historical arrest data into black-box prediction engines, GoVia’s analytics would be grounded in rich, contextual encounter data—who was stopped, what happened, and whether policy and law were followed—auditable by independent researchers and community oversight bodies.

4. Shared safety incentives in a Protect and Serve world

If the Protect and Serve Act becomes law, it will create powerful legal incentives for prosecutors to pursue severe penalties in cases where officers are attacked, especially when they can prove that the officer was “knowingly injured” or targeted. In practice, that could raise fears among civilians that any physical struggle—however confused or reactive—could be reframed as a federal felony.

GoVia can mitigate that fear by making intent and sequence of events clearer, which benefits both sides:

- For civilians, accurate, time-stamped documentation can show whether an encounter escalated suddenly, whether commands were clear, and whether the person complied before force was used.

- For officers, the same record can establish that they gave lawful commands, attempted de-escalation, and only used force when necessary; in a genuine attack, that evidence strengthens the Protect and Serve case.

By making every high-risk encounter more legible to later reviewers—prosecutors, defense lawyers, internal affairs, civilian oversight—GoVia can help ensure that the new federal penalties are targeted at truly intentional, unlawful attacks on officers, not at chaotic scuffles born of miscommunication or fear.

5. Narrative reframing: highlighting “heroes” on both sides

Public opinion research shows that while Democrats and Republicans diverge sharply on defunding or abolishing police, majorities across the spectrum support accountability, better training, and mechanisms to identify and address misconduct. At the same time, Gallup data suggest growing confidence in police, especially among young adults and people of color, when departments are seen as responsive and community-focused.

GoVia’s “Highlight a Hero” concept can be aligned with those realities. Instead of valorizing only officers, the platform can surface and celebrate de-escalation and mutual care wherever it occurs: the officer who calmly resolves a mental health crisis without arrests; the teenager who complies even when he feels disrespected; the dispatcher who keeps a caller safe until help arrives; the bystander who records an encounter and also calls for medical aid.

By curating these stories—with explicit attention to racial equity and avoiding “copaganda”—GoVia can help reshape the cultural script of policing from zero-sum (pro-cop vs. anti-cop) to shared safety. In a legal era defined by Protect and Serve, Back the Blue, and Thin Blue Line, that reframing is not cosmetic; it is a necessary counterweight to the politics of fear.

In an America where Black drivers still brace at the sight of flashing lights, where officers feel one social media clip away from ruin, and where Congress responds with ever-tougher penalties, the central question is not whether we will protect police—it is whether we can protect everyone. Laws like the Protect and Serve Act draw a bright line around violence against officers; platforms like GoVia Highlight a Hero, if carefully and ethically built, can help ensure that fewer encounters ever cross that line.