A Cross‑Sector Analysis of Surveillance, Oversight, and Safer Alternatives

In the latter years of the Trump Administration, the Washington, D.C., metropolitan area became a critical testing ground for integrating artificial intelligence into modern policing. Law enforcement agencies, led by the Metropolitan Police Department (MPD), adopted AI tools such as facial recognition systems, automated license plate readers, and predictive analytics engines to expand surveillance and accelerate investigations. Adoption carried consequences, however, raising legal, ethical, and economic concerns that continue to test the balance between security and civil liberties in the nation’s capital.

The AI Infrastructure Behind D.C. Policing

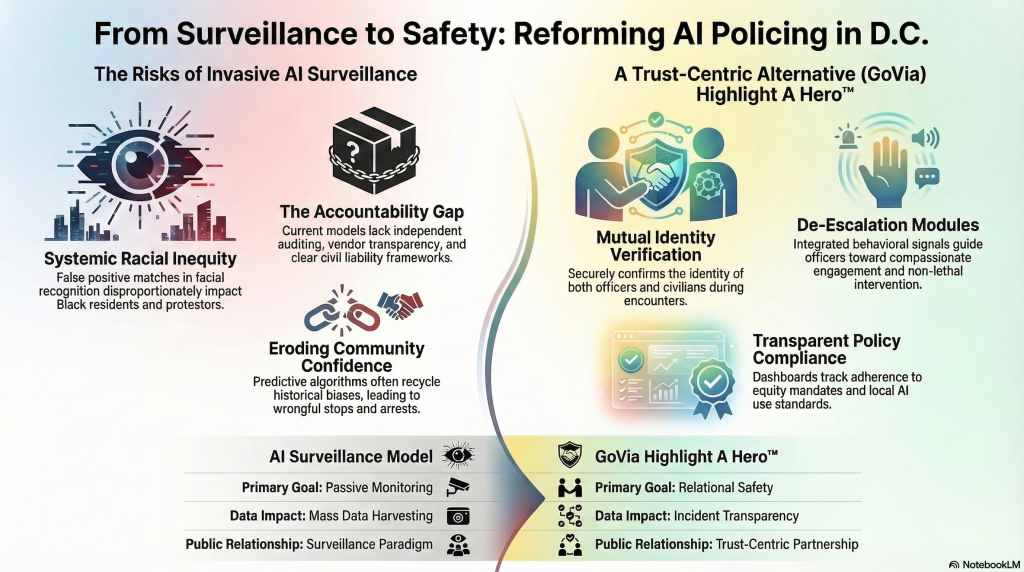

The National Capital Region Facial Recognition Investigative Leads System (NCR‑FRILS) exemplified the promise and peril of AI in law enforcement. Designed to match suspect images against vast databases, it was used to identify protesters during periods of political unrest. Yet investigations by The Washington Post and oversight bodies revealed instances of false‑positive matches that disproportionately affected Black residents and protest participants.

In parallel, the MPD implemented AI‑powered social media monitoring and license plate tracking technologies, feeding an expanding network of surveillance data shared among regional agencies. Companies like Flock Safety, along with platforms integrated into fusion centers, enabled large‑scale data aggregation that blended criminal intelligence with general mobility patterns across the city.

Civil Liberties, Legal Gray Zones, and Policy Gaps

Despite the rapid deployment of these tools, federal law offered no comprehensive oversight framework for law enforcement’s use of facial recognition or algorithmic policing. At the local level, privacy advocates, led by the Electronic Privacy Information Center (EPIC) and supported by the ACLU, pressed for moratoriums on facial surveillance, citing opaque procurement processes and untested accuracy claims.

Key concerns included:

- The absence of independent auditing mechanisms for algorithmic decisions.

- Lack of transparency obligations for AI vendor contracts and data-sharing practices.

- Civil liability ambiguity, leaving victims of false identifications with limited recourse.

These shortcomings, compounded by the political emphasis on “law and order,” led to increasing public distrust. What should have been a modernization effort instead highlighted systemic inequities amplified by automated decision-making.

Where Technology Failed Safety

AI‑driven policing under these conditions eroded community confidence. When algorithms replace discretion and oversight lags behind innovation, both officers and civilians face greater danger.

- Officers may engage with suspects based on flawed data or misidentification.

- Citizens — especially in marginalized neighborhoods — encounter heightened risk of wrongful stops or arrests.

- The feedback loops built into predictive policing recycle historical bias, targeting the same communities repeatedly under the guise of objectivity.
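The feedback-loop point can be made concrete with a deliberately simplified model. This is an illustrative sketch under stated assumptions, not a description of any MPD or vendor system: the function `simulate_feedback` and its parameters are hypothetical, both neighborhoods are given identical true incident rates, and incidents are assumed to be recorded only where patrols are sent. Patrols are then allocated from the historical record, either proportionally or entirely to the current "hotspot."

```python
from typing import List, Sequence


def simulate_feedback(
    initial_records: Sequence[float],
    true_rates: Sequence[float],
    rounds: int,
    patrols_per_round: float = 100.0,
    greedy: bool = False,
) -> List[float]:
    """Hypothetical toy model of a predictive-policing feedback loop.

    Patrols are allocated from past recorded incidents, and new incidents
    are recorded only in proportion to where patrols are sent. Returns each
    area's share of all recorded incidents after `rounds` iterations.
    """
    records = [float(r) for r in initial_records]
    for _ in range(rounds):
        total = sum(records)
        if greedy:
            # Send every patrol to the area with the most recorded incidents.
            hotspot = records.index(max(records))
            allocation = [
                patrols_per_round if i == hotspot else 0.0
                for i in range(len(records))
            ]
        else:
            # Split patrols in proportion to the historical record.
            allocation = [patrols_per_round * r / total for r in records]
        # Assumption: recorded incidents = patrol presence x true incident rate.
        for i, (patrols, rate) in enumerate(zip(allocation, true_rates)):
            records[i] += patrols * rate
    total = sum(records)
    return [r / total for r in records]


# Two neighborhoods with the SAME true rate but a 60/40 skew in past records.
print(simulate_feedback([60, 40], [0.5, 0.5], rounds=50))
print(simulate_feedback([60, 40], [0.5, 0.5], rounds=50, greedy=True))
```

Under proportional allocation, the initial 60/40 skew in the records never corrects, even though the underlying rates are equal; under the greedy rule, the first neighborhood's share climbs above 98 percent after 50 rounds. The model omits nearly everything about real deployments. Its only purpose is to show how data gathered where patrols already go can end up confirming the original skew.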

How GoVia Highlight A Hero™ Could Bridge the Divide

Amid this policy turbulence, solutions like GoVia Highlight A Hero™ offer an alternative vision grounded in real‑time communication, de‑escalation, and verified identification without invasive mass surveillance. The platform, designed to make encounters between the public and law enforcement safer, uses technology not to watch but to connect.

Its core value propositions include:

- Mutual verification features that confirm both the identity of officers and civilians during an encounter.

- Incident transparency dashboards, which securely record interactions and allow authorized oversight without broad data harvesting.

- Integrated mental‑health and behavioral signal modules, guiding officers on compassionate engagement techniques when non‑lethal intervention is required.

- Policy compliance tracking, ensuring that departments adhere to local AI use standards and equity mandates.

In contrast to D.C.’s AI surveillance paradigm, GoVia operates within a trust‑centric model, empowering communities to see law enforcement as partners rather than monitors. By preventing escalation before it begins, this approach could reduce both litigation risk and public safety spending.

Policy Recommendations

To achieve meaningful reform, D.C.’s next generation of policing policies should:

- Mandate third‑party audits and racial equity impact assessments for all AI policing tools.

- Establish federal‑local AI ethics boards to align technology standards across agencies.

- Prioritize community‑controlled technology partnerships (like GoVia Highlight A Hero™) that focus on relational safety rather than passive surveillance.

- Implement regulatory sandboxes for safe public‑sector innovation, in which transparency, privacy, and accountability evolve alongside capability.

Technology alone cannot guarantee justice. What matters is how it is governed, and whether the tools of the future serve as shields for safety or mirrors of bias. By embedding empathy and transparency into design, platforms like GoVia Highlight A Hero™ could represent the next chapter of equitable public safety, one that measures success not only in arrests but in trust restored.